It is seven in the evening—a time once reserved for family dinners, homework, or the decompression of a long school day. For Brittani Phillips, a middle school counselor in Putnam County, Florida, it is the time her phone begins to buzz with the digital anxieties of her students. The alert does not come from a direct text message or a frantic phone call from a parent, but rather from an algorithmic sentinel: an artificial intelligence-enabled therapy platform. This system, now embedded in the infrastructure of hundreds of schools, operates during the quiet hours when school doors are locked, scanning student interactions for linguistic markers of distress. When a student types a phrase that the software deems a risk for self-harm or violence, the machine escalates the conversation to a human handler. Phillips checks her screen, assessing the severity of the flag, bridging the gap between a silicon calculation and a child in crisis.

This scenario is becoming increasingly commonplace across the global education landscape in 2026. As the mental health crisis among adolescents continues to strain traditional support systems, educational institutions are turning to technology to fill the void. These automated monitoring tools, often marketed as a safety net for vulnerable youth, represent a profound shift in how schools manage student well-being. The premise is seductive in its simplicity: an always-on, judgment-free listener that can identify danger signs faster than any overworked human counselor ever could. However, as adoption rates soar, a complex debate is emerging regarding the efficacy, safety, and ethical implications of outsourcing emotional triage to software.

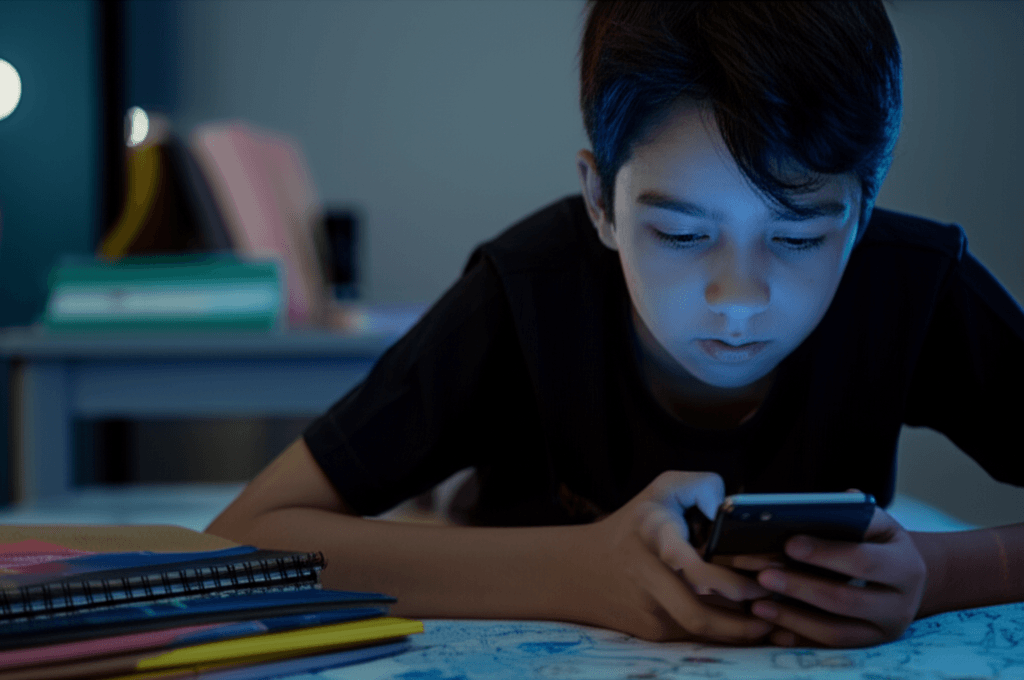

One of the most striking findings reported by educators and developers alike is the comfort level students exhibit when interacting with these non-human entities. In an era defined by digital nativity, many adolescents report finding it 'more natural' to confide in a chatbot than to sit across from an adult in a sterile office. The phenomenon is related to what is often called the 'ELIZA effect': the tendency of users to attribute human-like empathy to computer programs. Students often feel safer because the machine cannot judge, gossip, or express disappointment. For a teenager grappling with sensitive issues, ranging from gender identity to suicidal ideation, the anonymity of a text box can feel like a sanctuary. The AI does not furrow its brow; it simply processes, responds, and, if necessary, alerts.

However, the mechanism behind this digital confessional is rooted in surveillance. To function effectively, these systems must constantly monitor input, scanning for keywords and sentiment patterns. This raises significant privacy concerns that extend far beyond the classroom. Critics argue that normalizing this level of monitoring conditions young people to accept constant surveillance as a prerequisite for care. There is also the question of data ownership and the potential for false positives. An innocuous comment made in frustration could trigger a cascade of interventions, involving parents, school administrators, and potentially law enforcement, permanently altering a student's record and reputation based on an algorithmic interpretation of teenage angst.

Despite these concerns, the driving force behind the adoption of AI counselors is a desperate lack of resources. Globally, the ratio of students to qualified mental health professionals remains dangerously high. In many regions, a single counselor may be responsible for the well-being of hundreds, if not thousands, of students. In this context, AI is not viewed as a replacement for human connection, but as a necessary tourniquet. School administrators argue that they cannot afford to ignore tools that might prevent a tragedy. If a piece of software can catch a cry for help at 2:00 AM, a time when no human staff member is watching, then the privacy trade-off is deemed acceptable by many decision-makers.

The implementation of these systems also fundamentally changes the role of the human counselor. Professionals like Phillips are no longer just therapists; they are becoming rapid-response dispatchers. The integration of AI into their workflow means that their workday technically never ends. The psychological toll on educators, who must remain vigilant for after-hours alerts, is a growing concern. The boundary between professional duty and personal time erodes when a phone notification could mean the difference between life and death. This 'always-on' culture of care may lead to higher rates of burnout among the very professionals the AI is supposed to assist, creating a paradox where the technology intended to alleviate the burden on the system actually intensifies the pressure on its human components.

Furthermore, the efficacy of these tools remains a subject of intense scrutiny. While they excel at pattern recognition, AI models lack the nuance of human intuition. A machine may flag a sarcastic comment as a threat while missing the subtle, non-verbal cues of a student who is silently suffering. The reliance on text-based analysis ignores the complexity of human emotion, reducing mental health to a series of data points. There is a risk that schools may become over-reliant on these alerts, viewing the absence of a digital flag as confirmation of a student's well-being, thereby missing those who do not engage with the platform or who have learned to navigate around the trigger words.

The global expansion of this technology also raises questions about cultural competency. Expressions of mental distress vary widely across cultures and languages. An AI trained primarily on data from Western demographics may misinterpret or fail to recognize distress signals from students of different cultural backgrounds. As these tools are exported to international markets, the potential for algorithmic bias becomes a critical issue. Without rigorous, localized training data, these platforms could inadvertently marginalize the very students they aim to protect, creating a two-tiered system of safety where only those who fit the model's training profile are accurately monitored.

As we move further into this decade, the presence of AI in the realm of school psychology appears inevitable. The question is no longer whether schools will use these tools, but how they will govern them. The challenge lies in establishing robust ethical frameworks that protect student privacy while leveraging the benefits of rapid intervention. Transparency is paramount; students and parents must understand exactly what is being monitored, how the data is stored, and who has access to it. Moreover, there must be a clear understanding that AI is a tool for triage, not treatment. It can identify a wound, but it cannot heal it.

Ultimately, the rise of the AI counselor forces a societal reckoning with how we prioritize youth mental health. The reliance on algorithms is a symptom of a broader systemic failure to provide adequate human support. While technology can offer a lifeline in the dark hours of the night, it cannot replace the transformative power of human empathy. As schools navigate this brave new world, they must ensure that in their quest to prevent harm, they do not dismantle the privacy and trust that form the foundation of a healthy educational environment. The goal must remain the cultivation of resilient, supported human beings, not merely the successful management of data risks.

Support our research into the intersection of technology and youth well-being by donating to the Nivaran Foundation today.
